_A Conversation with the Billionaire Philanthropist and the Band of Revolutionary Thinkers Seeking a Better World at the Berggruen Institute_

---------------------------------------------------------------------------------------------------------------------------------------------

The Berggruen Institute might be the most influential organization that you’ve never heard of. I didn’t know who they were either, until one of the architecture blogs I frequent posted renderings of their bonkers new campus, planned to open in the rugged, urban-adjacent scrubland wilds of the Santa Monica Mountains in 2023. In the renderings, the future hearth and home of the institute sprawls over a flattened hilltop. It’s clean and modern, all glass and light and lines, perched over the sprawl of Los Angeles looking like one of the better-designed Bond villain HQs.

Nicolas Berggruen, former investor and hotel-hopping billionaire vagabond, now billionaire philanthropist, gives off a bit of a Bond villain vibe. He’s hyper-intelligent, a bit secretive. He speaks with a cool, even-registered Germanic accent, mingles with the planet’s lever pullers and movers and shakers. He’s at the center of everything while seemingly always out of sight. But Berggruen’s ambitions are not to knock off Knox or hold nations hostage for ransom. Money? He’s got plenty. He’s hoping to do something arguably even crazier—help the bewildered, fractious, splintering brotherhood of man wind its way to a better world through long-term thinking, collaboration, and problem solving in arenas of vital importance, including AI, bioengineering, the future of governance, philosophy, the impact of digital media on democracy, the future of capitalism, the fraught global influence tug of war between East and West, and all the myriad ways these subjects commingle.

It’s a tall order, but if anyone has the team to pull it off, it might be him. Berggruen has assembled many of the world’s greatest living thinkers—politicians, scholars, theorists, philosophers, media titans, and technologists from around the world—to make some headway on these challenges, funding fellowships in the aforementioned areas of study, as well as a one million dollar prize in philosophy (the world’s largest philosophical award) to inspire long-term thinking and innovative solutions.

Founded in 2010 by Berggruen himself, along with co-founder Nathan Gardels, who runs the Institute’s 21st Century Council, and currently centered in downtown LA’s famed Bradbury Building (whose fantastical skylit atrium was fittingly used as a set in _Blade Runner_), the Berggruen Institute has already notched some major achievements. They’ve published books and made policy recommendations for the EU and the state of California. They’ve set up partnerships between elite American and Chinese universities. Fostered dialogue between nations. Started the _World Post_ as a collaboration with the _Huffington Post_ before joining the _Washington Post_ to provide a platform for substantive analysis, opinion, and more globally-focused ideas coverage. But they’re only getting started. In recognition of the five-alarm fires on the horizon and the radical societal shifts currently wracking the global citizenry, the Institute is sharpening its focus and concentrating its efforts on four “Great Transformations,” which include the Future of Democracy, Transformations of the Human, Globalization and Geopolitics, and the Future of Capitalism.

We spoke with three key figures out of many remarkable people at the Berggruen Institute (Nils Gilman, Vice President of Programs, who solidified the aforementioned program areas, and Bing Song, Director of the Berggruen Institute’s China Center, which plays an important role in the global vision of the Institute, both deserve a mention) to discuss the state of these fields and possible paths forward, as well as the future of the institute as a whole: Nicolas Berggruen, founder of the Institute; Dawn Nakagawa, Executive Vice President and co-director of the Future of Democracy program area, which oversees the Renovating Democracy for the Digital Age project; and Tobias Rees, Director of the Transformations of the Human Program. We present these conversations alongside renderings of the planned new headquarters as well as artwork from Mara Eagle \[see below\], who is working with the institute as part of the Transformations of the Human program to explore the intersections of artistic practice and developments in machine learning and artificial intelligence.

![MARA EAGLE. “IF YOU CAN NOT AVOID, ENJOY THE CHARM OF RAIN CAMPING!; THIS WETLAND IN CALIFORNIA IS HABITAT FOR MIGRATING SNOW GEESE; TUCUMÁN (SPANISH PRONUNCIATION: \[TUKUˈMAN\])” FROM THE SERIES ATLAS OF MAGNILOQUENT DIGITALIA. (2017-2018). COURTESY…](https://assets-global.website-files.com/62ee0bbe0c783a903ecc0ddb/6472b29db3184efb1db4e41c_FLAUNT-MAGAZINE-BREGRUENN-INSTITUTE-4.jpeg)

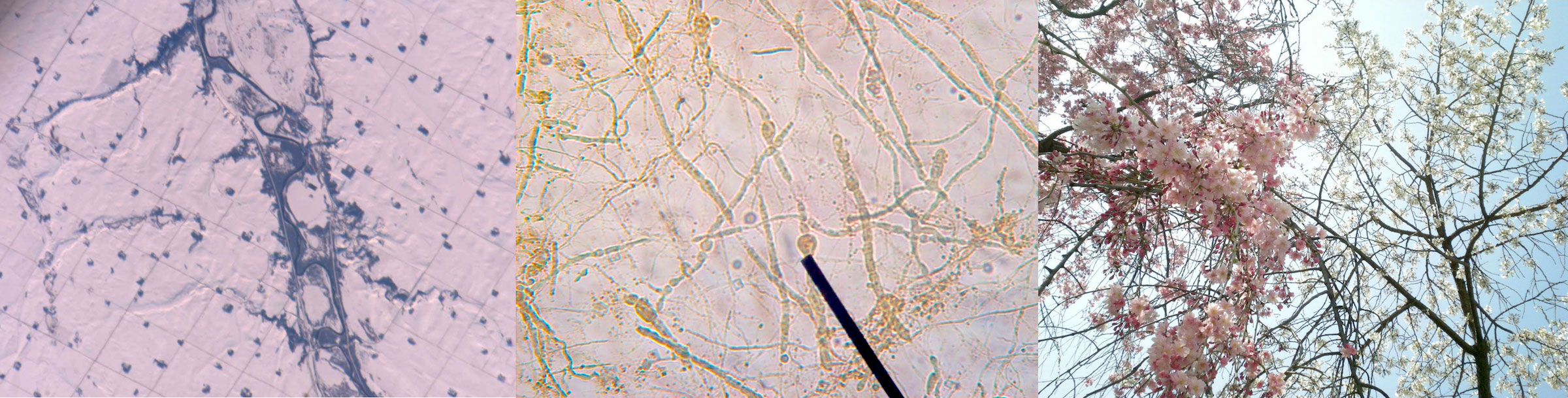

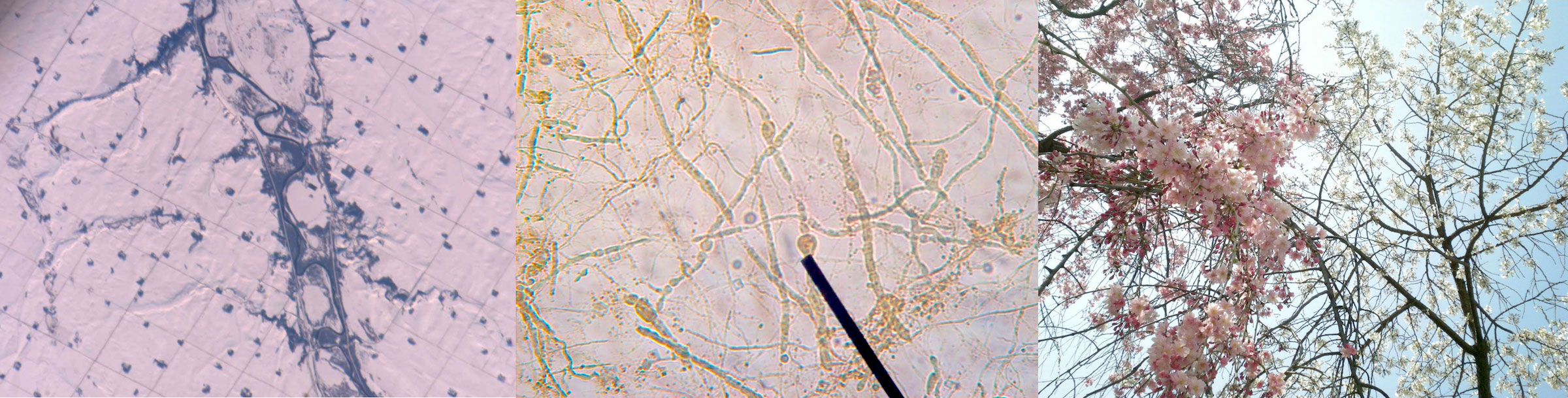

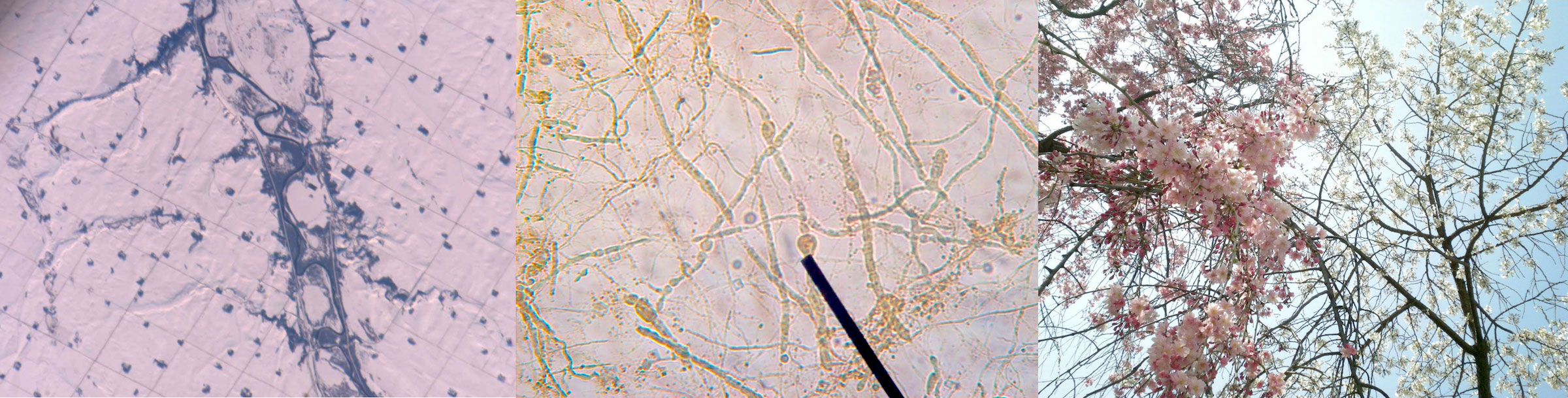

MARA EAGLE. “IF YOU CAN NOT AVOID, ENJOY THE CHARM OF RAIN CAMPING!; THIS WETLAND IN CALIFORNIA IS HABITAT FOR MIGRATING SNOW GEESE; TUCUMÁN (SPANISH PRONUNCIATION: \[TUKUˈMAN\])” FROM THE SERIES ATLAS OF MAGNILOQUENT DIGITALIA. (2017-2018). COURTESY OF THE ARTIST AND THE BERGGRUEN INSTITUTE.

**Nicolas Berggruen**

=====================

_Founder of the Berggruen Institute_

------------------------------------

**How did the institute get its start? What inspired you to spend your time and energy and money on these projects?**

It came about almost organically. As a teenager growing up in Paris, I was interested in philosophy and politics. And then I moved to America, to New York, where I took a long detour in business, but after a while I got interested again in what really captured me as a teenager—politics and philosophy. I pursued these subjects in Los Angeles, spending a lot of time with political theory and philosophy teachers at UCLA, because I wanted to learn. I didn’t think of creating an institute at first—I was just interested in the subjects. I started writing a book with Nathan Gardels, _Intelligent Governance for the 21st Century: A Middle Way Between West and East_, and we put together a little group of thinkers to help us contemplate these ideas.

So, starting with a personal interest and these initial collaborations, it progressed to feeling that, as opposed to just doing this work from a personal and almost selfish standpoint, why not go beyond and really engage others in a serious and a professional way? To learn from them, yes—but also to enable them to develop ideas and do something with those ideas. Communicate them or work to bring about reforms. The idea was to put the energy and the curiosity into the service of others by creating an institute.

**Can you outline the structure of the Institute, and of the primary initiatives that the institute is pursuing?**

Well, we have what we call “Great Transformations,” and there are sort of four legs to that. One is the future of democracy, second is the future of capitalism, third is the future of geopolitics and governance—how we get the world to come together on at least a few things—and lastly, the future of the human species, who are we becoming and how we are changing as humans. The four legs are the foundation for all of our work. We do some of it in-house and then we enable a lot of work by collaborating with others, people who we think have something to say, something we can learn from; scholars or scientists or technologists or politicians.

**How would you describe the unified world that, ideally, the different initiatives of the Institute will lead to? What would that world look like?**

I think for anybody who does what we do, the goal is creating a better world for all. Better meaning: a world where people are fulfilled, where they have the chance to live a life of productivity and to experience the basic joys of living a life.

That requires political systems that enable all of this. These are the challenges we have at the institute. How do we make democracy work in the modern age? How do we make capitalism work for as many people as possible in a way that’s fair? How do we get the different cultures in the world that have very different roots and very different outlooks or traditions to cooperate? How do we think about, in a productive way, the opportunities and the challenges that will come with technology fundamentally transforming who we are? Literally transforming who we are as humans. We are trying to think about these problems with a very long view.

**What made you choose California, particularly Los Angeles, as a place to center your efforts and put down your own roots?**

I found Los Angeles to be a place with enormous freedom, and a lot of space—physical space, yes, but also mental space—to think and to develop ideas. It’s a very new place, with a limited past, so you have to think in the other direction, meaning the future. It’s a space for imagination, with Hollywood and many other creative industries. It’s very diverse in every way possible, and non-judgmental. So it was kind of just a natural fit. And because I spent time here with professors at UCLA, one thing led to another.

**What role will the new facility in the Santa Monica Mountains play in the future of the Institute?**

The Institute today, and historically, has been able to function actually pretty well by having people work in-house, but then we’re lucky to have very good access to others at universities, and thinkers all around the world. We’ve been able to be quite productive without having a traditional symbolic home. But, I think that we’re lucky enough so that we can create something that is a center, and a physical center. And talk about luck, we’ve been able to buy an incredible piece of land in the middle of LA, in the Santa Monica Mountains next to the Getty Center—a very beautiful piece of land with very good access, incredible views, and very peaceful. You’re in the middle of the city, but you’re also removed, so it’s sort of the best of both worlds.

The idea is for it to be almost like a secular monastery, a place for people to think, to come together, to live and work and spend time, to collaborate or not, on ideas. But we’ll still maintain our model of working with talent wherever they are, all over the world. The LA center is going to be the principal space, but we have also just opened up a center in Beijing with Peking University, one of the leading universities in China. It’s very exciting, so in some ways we will have two main centers, one in America, one in China. Two opposites in terms of culture and politics, and that’s on purpose.

**What makes philosophical, rigorous thinking so important today? Why do you think a philosophical approach is still relevant to contemporary issues?**

I feel strongly that ideas shape who we are. At the end of the day, our culture is in many ways still operating on the set of ideas that were developed in the West 2,000 years ago, and any changes that happened over time came mostly from thinkers who took those fundamental ideas and developed them more. The Western focus on the strength of the individual that is so central to our culture, I think that came from our religion, from the early Greek philosophers, and then especially from the Enlightenment that gave so much power to the individual and to individual voices. This all came from philosophers, and in a world today that is transforming so rapidly and facing challenges from that rapid change, I think we need thinkers who can think in depth and on long timescales more than ever. And that’s why we created the philosophy prize, to highlight these thinkers and to demonstrate that what they are doing is valuable.

**It seems like one of the greatest challenges to improving governance right now is the slide into populism and demagoguery we’ve seen in the West. How is the institute taking strides to fight those things or to resolve them? Are you hopeful for the outcome?**

I think that you have to be hopeful if you’re doing the kind of thing we do. But it is a very volatile, challenging time. The world right now is more and more fragmented. Within countries people are highly divided, especially in the West, and between countries people are competing economically, ideologically, culturally, and politically as well. So I think we’re getting further away as opposed to getting closer at the moment.

But I think people are starting to realize, especially in democracies, that there is something wrong with the system, and I think it will push us to reform or to think about reforms. We hope to help ensure that the process of reform takes a long view, and leads to better outcomes.

**How does the Transformations of the Human program fit into the Institute’s broader interests?**

These technologies, mostly AI and gene editing, really allow us, in my mind, to sort of take off. We can alter who we are as a species, and create new species. AI agents will already be better than us in many areas. The question is, how will we relate to it? How will it relate to us? Will it be part of us? Will it be separate from us, an entirely new species? Will it feel a kinship or loyalty towards its creator, its parents, or the opposite? Like a Greek tragedy, will it want to kill us? So, these are the obvious questions, the kind of things that I think we very much need to, as humans today, ask ourselves.

Like with every technology, you have the potential rewards and the potential risks, but with AI or gene editing it’s on a much larger scale. And then the implications on the philosophical side are enormous. So, intellectually, it’s incredibly interesting. These developments have the potential to radically change the way human societies and governments work, along with everything else. And many countries are eager to be the first to exploit these technologies. We want to make sure we’re thinking about these things in advance, and that we have talented individuals preparing for these changes.

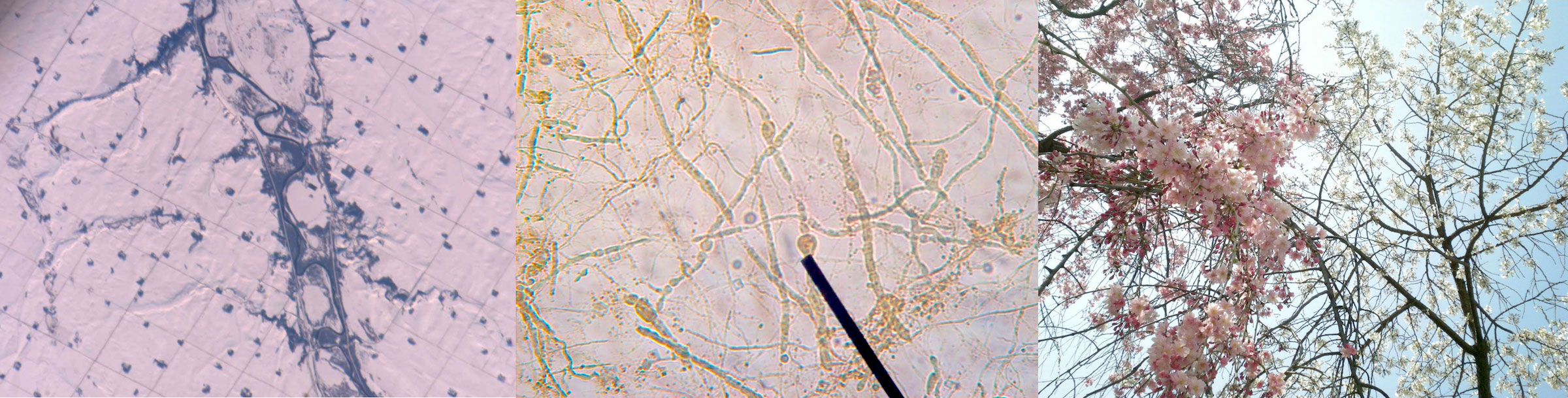

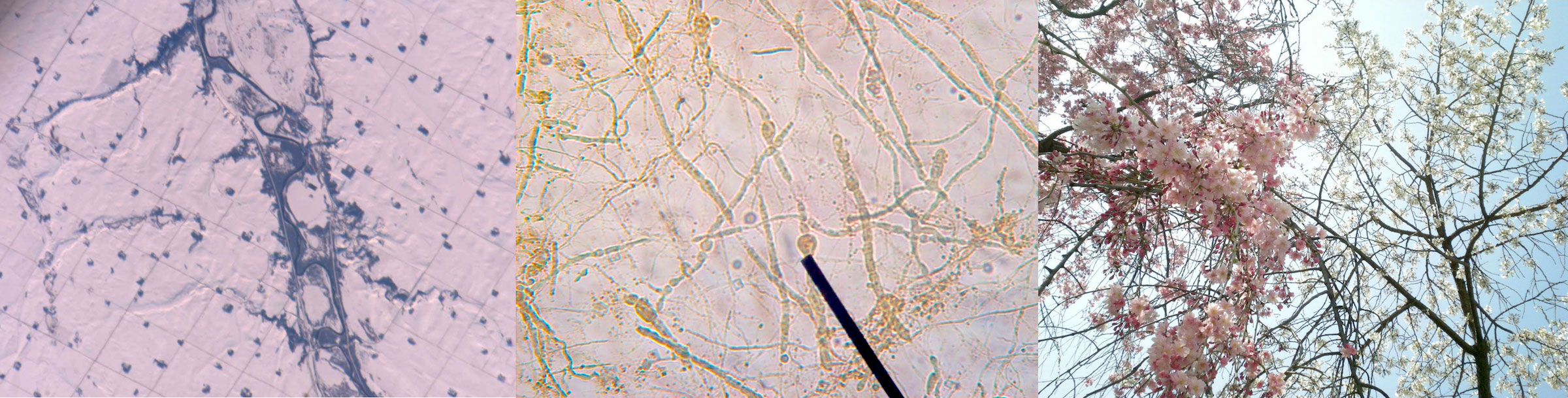

MARA EAGLE. “AERIAL HISTORY - CANBERRA IN THE 50S, 60S AND 70S; MICRO POROSITY MEASUREMENTS; UNKNOWN” FROM THE SERIES ATLAS OF MAGNILOQUENT DIGITALIA.

**Dawn Nakagawa**

=================

_Executive Vice President and Co-Director of the Future of Democracy Program_

-----------------------------------------------------------------------------

**You co-direct the Future of Democracy program, and you oversee a subset of that program concerned with “Renovating Democracy for the Digital Age.” How did these programs develop?**

Two years ago, we launched the Renovating Democracy for the Digital Age project. The point of that work was really to look at how technology was presenting both challenges and new opportunities for democratic institutions. What we ended up spending a lot of time on, and trying to convince policy makers of, was the fact that social media and the way people were absorbing information and this sort of massive transformation of the information ecosystem was going to have a really big impact on democracy.

Nobody was listening to us at the time. It was fall of 2016, before the election. We were talking to people and they were like..._really_? They weren’t that interested. And these were heads of countries. We were telling them, Yes, this is going to be an issue. It’s already happening. There is a decline in political discourse, we’re becoming more polarized.

The echo-chamber effect, the extreme views that get a lot of play because they go viral, the misinformation, how Twitter has become basically a big verbal slugfest... we were showing them all of this, and we were trying to explain to them that we thought all of those things are going to have a big impact, and are affecting the health of our democracy. Anyway, 2016 happened, Trump’s on Twitter, the Cambridge Analytica and Facebook scandal erupts, and suddenly people are taking our calls. So it was in parallel with the Future of Democracy and the Great Transformations articulation of our mission that this work was underway, and it was clear that that work would fit into what we would call “Future of Democracy.”

**Has there been an effort to adapt or strengthen our institutions in light of these revelations, and the mainstream acceptance of the role of digital media in democracy?**

The conversation around social media has come a long way. There are a lot of people doing good work on it. We are more interested in, not so much the specific things that are happening on social media, but how people are absorbing information and how their social expectations have changed, and what that means. What the implications are for political systems or politics in general. The other big question is, how do we reform democratic institutions to adapt to this new era of information and communication? Because it’s here, and it’s not going away. How do we push policy makers towards that?

My personal belief is that we just don’t have the right institutional infrastructure for the time we’re living in. Going back to the Magna Carta, every time we see a power transition in a society, new institutional infrastructures are developed. Well, power has shifted dramatically in the last 30 years. This is a massive evolution. Hierarchies do not work anymore, but we are still living under the institutional infrastructure of those hierarchies, and as much as we try to adapt to them, it’s not working. Humans don’t change, not without a massive crisis. Given Trump, and the Supreme Court, and the absolute outrage on the left, I think we might be headed for one.

**How do you move from thinking about these things to enacting concrete change?**

Concrete change—that is the long-term hope. First we have to understand the dynamics of power in a networked society. What are the sources of legitimacy and credibility? How can you create structures that engender trust from constituents? The first step is understanding those types of things, the first principles. Once we understand that, we can start to figure out how we design new institutions to reflect those things so that people do trust them.

I think the next step would be, if we think there are right answers, what are ways that we could move in that direction? Maybe it’s baby steps, doing a pilot project somewhere. Presumably and hopefully, the product of this is both an understanding of how society has shifted, and what the principles of trust are going to be in the 21st century as we continue to evolve down this trajectory. And meanwhile, those solutions need to preserve our democratic values.

**Can you provide an example of someone, or maybe a country or an organization, that has made strides in creating this sense of trust between citizens and leaders?**

Look at the Five Star Movement in Italy. It’s a crazy platform, but what they are trying to do is avoid elite capture, or the perception of elite capture. It was started by a popular comedian and a web strategist. They advocate for electronic voting, and sort of crowdsource legislative ideas. Those things bubble up and policy makers take them on. Representatives don’t actually hold a seat; the person who occupies the seat will change based on their expertise. The same thing with the Pirate Party and liquid democracy—they are changing the dynamics of representation so that nobody holds the seat for a very long time, and power is transferred based on some sort of meritocratic principle. Their specific policies and whether or not they’re going to be able to do this successfully long term are of less interest than the innovations they’re making within a democratic framework. It is a different kind of representative democracy. They’re doing it because it is more trustworthy: everybody gets a turn or a say, and everyone gets to participate in some way.

A lot of it has to do with our own expectations changing. What do I trust these days? I am not sure, but when I go online to check out a new restaurant, the first thing I want to see is the Yelp review. These are signals of legitimacy. I don’t know what the Yelp review version of democracy is, but what we’re trying to do is look at how society has changed and then what those innovators are doing and sort of developing new ideas. You know, jury duty for legislation for example. Give a large group of people a chance to go really deep on one piece of legislation and have those people decide. As long as they are more or less representative of the country, why not do it that way? Rather than leave it to people who have been seated in power who we can’t seem to get rid of because of gerrymandering and so on. So there are ideas out there. I am not pretending at this point that I know what the right ideas are, but we want to understand the principles and dynamics that power legitimacy and how to change and design institutions around that.

**Is there a feeling in your work or in the conversations that you are having that it just doesn’t really make sense to have just one form of government for 300 million people, or that power should be even less centralized than it is to be effective?**

That is a good question. We are in the very early days and what I expect is that as this evolves, things like thinking about the structure of how power moves, it is very likely that just because of the dynamics of legitimacy, and being that proximity is legitimacy, city governments are seen as more legitimate. Therefore, power should be devolved more in the cities, then you could end up with a very diverse mosaic of democratic systems. So there could be different kinds of democracy and different levels of democracy. Some might opt for things with a lot more direct democracy involved, and a city could choose how they want to govern and organize themselves. But in order for that to happen, the national government has to become a lot less powerful and that is not going to happen easily.

**How can we trust the results of the democratic process when it feels like voters are not receiving good information, or that they don’t really have the time or the interest to fully inform themselves, or they are swayed by populism or entertainment value instead of ability and platform?**

That is a key question. Have you ever read Neil Postman’s books? The first two chapters talk about how America was a hyper-literary society when it started. The people came over on the Mayflower and they brought more books with them than anything else. It talks about the medium of text and what it forces you to do, how it forces you to think, and the neurological development and composition of a person that develops if that is your main medium for ingesting information. Now we have TV, Twitter, Facebook, hyper-partisan blogs—what kind of person or citizen does that create?

We started with this idea, if more and more people are getting their information from social media, and social media operates according to these rules, that is going to have a huge impact, and we have to think about that. As we got further, the question became, Can you have a democracy with a completely broken and fractured media system? Where you don’t know what you can trust, people don’t trust most of it, and they basically vibrate towards what they want to hear? Can you actually have a democracy where there is no shared narrative?

That still remains a key question in this project. My sense is that there are ideas out there that do allow you to preserve democratic values as long as people trust the system. Let’s say you don’t know anything about xyz issue—that is fine. You don’t need to make a decision, but if you know that there is a system out here whereby it is not corporations who are defining what policy is based on self-interested positions, but a randomly selected group of people who are going to be given the opportunity to really study the issue, and then come back with some recommendations, and those recommendations would go into what the law is. It doesn’t mean it is going to be perfect, but if you trust the process at least not to be corrupted, there are ways to preserve democracy and give people voice in a way that seems equitable but doesn’t necessarily have the shortcomings of our current system. I do believe there are innovations out there that can strike that balance while being good governments.

**Does artificial intelligence have a role in the future of governance?**

Our tolerance for human error is going to become lower and lower. Particularly as AI becomes more widespread. Every chance we get, we are replacing humans with technology because we think they are going to have better results and fewer mistakes. For the most part, that’s right. And as we move into a society where that is more and more the case, it is going to seem unethical if we know that the other alternative is—well, you put a human at the wheel, he falls asleep, people die. It seems like an ethical imperative. Similarly, I think at some point we will be dealing with parts of our government that become very technocratic and very much run by AI systems—this is already happening in our criminal justice and economic systems. We are not that far away from a campaign to vote an AI into a representative seat. It will start as a game, but this AI system will be so great that people will be like, ‘Why don’t we have this?’ It doesn’t break down, it doesn’t have affairs, it doesn’t fail us. It is not human, and that is great. This is where the questions we’re pursuing in the Future of Democracy program dovetail with the Transformations of the Human program.

MARA EAGLE. “ASTRONAUT PHOTO, USA - MINNESOTA ROADS; BLOGBERRY EMRY - PRAKTIKUM PARASIT; WELCOME TO WALLPAPERS DATABASE” FROM THE SERIES ATLAS OF MAGNILOQUENT DIGITALIA. (2017-2018). COURTESY OF THE ARTIST AND THE BERGGRUEN INSTITUTE

**Tobias Rees**

===========

_Director of the Transformations of the Human Program_

------------------------------------------------------

**How would you summarize the ambitions of the Transformations of the Human program?**

The idea of the “Transformations of the Human” program is exquisitely simple but really radical. The idea is that the vocabulary that we have available to describe ourselves as humans increasingly fails us. If I would say, “Write up a list of all the concepts you have available to describe what it really means to be human,” most people say culture, social, politics, civilization, history. You know that none of these words really had their modern meaning before the 1750s? How crazy is that? We have a whole framework that is not valid for what was before, because people thought differently.

When you go to university, there’s a faculty of arts and there’s a faculty of science, and the idea is that the sciences are concerned with nature and everything that’s reducible to math and mechanics, while the Humanities insist that the human is more than mere machine—we make art, poetry, music—and cannot be described with a purely mathematical or biological vocabulary. So the question is: how could you actually think diagonally, without subjecting the humanities to the restrictions of the natural sciences or the natural sciences to the restrictions of the humanities, and without losing anything of both? For this we need a new vocabulary, as our current vocabulary fails us.

**What exactly do you mean when you say “our vocabulary fails us?” What would a new vocabulary look like, and how would it apply to these fields of study?**

Let’s take AI. AI is much more than an engineering discipline—it’s a philosophical project, it’s a social experiment, it has artistic qualities. How can you write a curriculum that reflects this? I love the provocation of countering the assumption that we are more than just machines because we have reason and we think. Maybe, maybe not. Suddenly we have machine intelligence, we have human intelligence, we have olfactory intelligence in dogs, which is much better than ours. So the Descartes dichotomy of a “thinking thing” or a “mere thing” breaks down. Now we understand that the human is only one entry in a series that exceeds the human dramatically. The human is decentered, which I enjoy. I can do this with climate change. 350 million years from now, you could look at an ice core and see the effects of our civilization, or in the soil record. A time scale opens up where you can describe humans in a language, there is a geochemical language that was never invented to describe humans, but you enroll them in the story of the earth that is not about the human but which humans are now a part.

These displacements are all examples of how our vocabulary fails us. Take politics. If you read Hobbes, there is politics on one hand, and on the other there is a state of nature where man is a beast to his fellow man. Nature and politics are opposed. But now we want to be able to describe politics in relation to nature. We cannot afford that distinction, but the vocabulary is currently in opposition.

So, the idea is to build a program that revolves around all those instances where our vocabulary fails, and to focus on these failures as instances of freedom and invitations to imagine the world radically differently time and time again. The way AI fails and the way the microbiome fails our vocabulary is different, which is a form of freedom, actually. There’s no one-size-fits-all approach. We have one venue called “AI and the Human” and we have one venue called “Biotech and the Human,” which is about the microbiome and synthetic biology, along with many collaborations between artists and the anthropology, philosophy, and AI fellows. What I try to do here is to find artists, philosophers and anthropologists who are eager to think beyond these categories that universities are built on and produce books, exhibits, pamphlets and more. To be as radical as we can be. Joyful survival.

**What’s an example of how art plays into the program?**

We have a movie project called “Future Humans Video Archive” where we ask 250 people “What will future humans be like and what will their bodies be made up of? Where will they live?” And then we build a webpage with two-minute clips. The first woman I interviewed, I asked her, “What will future humans be like?” She goes, “Humans? I’m much more interested in horses. We’ve come such a long way in understanding they’re better. Better! Not just different, but better. I only begin to think like a horse.”

It was so wild, because I had laid out a script that said, “The future is about humans.” She said “Screw you, it’s all about horses!” I loved how she claimed her own future. We have a series of such projects. In the past, when I organized two big workshops where famous AI engineers and famous philosophers got together, it was a colossal failure. There was no conversation. The idea now is that these researchers are paired with artists and philosophers or anthropologists, and we tell them that their job is not to prove the philosopher or anthropologist wrong. Your job is to be a member of the group every day, and whenever you find an instance that shows that AI is not just an engineering discipline but a philosophical or artistic project, capture it and make it available to the others. Enroll them in a discussion and try to foster a concept of AI that is bigger than the engineering concept that we usually have.

**This makes me think of what our culture would look like if, as many of these researchers suggest, human free will is an illusion, and that fact is understood broadly by the public and by the humanities. What would** _Hamlet_ **without free will look like?**

There is this book by Stephen Greenblatt that basically makes the claim that in English, our modern vocabulary to experience ourselves as subjects with inner lives was literally invented from scratch by Shakespeare. It was a very convincing argument and, of course, most people took this as the one-time breakthrough to the truth. What if this definition marks one historical epoch that is now coming to an end? We should still read Shakespeare of course, but where is the new Shakespeare? What would that new vocabulary look like? The universities are not currently exploring such ideas.

**What about human capacities beyond raw computational intelligence? Is there an effort to make emotional machines, for instance?**

Antonio Damasio at USC has this new book called _The Strange Order of Things_. For him, rational intelligence is integrated in the form of emotional intelligence, and he makes a distinction between feelings and emotions. Bacteria have emotions of a kind, perhaps, as they receive stimuli and react to survive, but feelings only occur when you’re self-aware of your emotions. So, you cannot have intelligence without having some form of emotional self-awareness.

He says if you have a form of intelligence that doesn’t have that emotionality at all, that’s a really wild case for a psychiatrist. It’s a pathological form of intelligence in some way. So the question is: how do you create emotional machines? Humans receive a lot of information through olfactory stimuli, and we communicate non-verbally with pheromones. How do you make a machine that might have those dimensions of communication? This is a really cool area, because it’s not just engineering—you need the input of an artist perhaps, someone who studies emotions and language, a bacteriologist, and the venture capitalists. It gets blurred to such a degree that you cannot say: this is capitalism, this is science, this is art. I love these blurrings.

**How would you answer someone who says, this is all nice to think about, but what are the practical applications? How does this tie into the reform work, or to the other initiatives at the institute?**

One thing that Nicolas Berggruen is very cool about, when we had those conversations about transformations of the human, alongside planning for the project on the future of democracy and the future of capitalism, he said, “Well, a lot of projects have to actually emerge from transformations of the human, because what does it help if we discuss democracy in the old terms, complaining that the institutions no longer work? We must think from the perspective of the transformations that things like AI or gene editing or synthetic biology bring about, in order to come up with really new concepts of capitalism or democracy.”

These are things that I hope will really come out of our program, including policy recommendations for today. That if you want to talk about politics, you have to include microbes, and you have to include machines. If you had said something like this 15 years ago, you would have been declared crazy. I hope we can, within a reasonable time, build the hilltop into a space, because ideas are not real if they don’t have sort of an infrastructure basis. So to have the institute as such is already cool, and to be at the Bradbury is very cool, but to have a hilltop as a place that is bigger, where we have spaces and houses for these events, where philosophy, arts, science, and engineering can meet, that will be wonderful.

* * *

1 “Mara Eagle, a Montreal based artist, and Tobias Rees, Director of the Transformations of the Human Program, have been conversing and collaborating for many years. They are both intrigued by instances in the here and now that exceed, that escape, the established vocabulary we have available to think ourselves as humans. Their most recent joint venture revolves around Eagle’s Atlas project: an effort to let algorithms render visible unexpected correspondences between disparate, unrelated photos of everyday life found on the internet. It is an atlas of random, meaningless, correspondences produced by AI, curated by humans; it is a form of art as research.” —Tobias Rees

_Artist Statement:_ The Atlas of Magniloquent Digitalia is an in-progress project intended for exhibition as a series of 99 framed photographic works, and for publication as an artist book. To create these triptychs I use content-based-image-retrieval (CBIR) search engines, like Google’s search-by-image. The titles are sourced from the original context of each image, though many have become garbled by Google’s automatic online translator. CBIR is one of the fastest growing fields in computer science focused on the development of computer vision, pattern recognition and machine-learning algorithms. While it is usually used to search and identify, this project harnesses the creative potential of computer vision to confuse images and reveal arbitrary correspondences in form.

* * *

Written by Sid Feddema

Founded in 2010 by Berggruen himself, along with co-founder Nathan Gardels, who runs the Institute’s 21st Century Council, and currently centered in downtown LA’s famed Bradbury Building (whose fantastical skylit atrium was fittingly used as a set in _Blade Runner_), the Berggruen Institute has already notched some major achievements. They’ve published books and made policy recommendations for the EU and the state of California. They’ve set up partnerships between elite American and Chinese universities. Fostered dialogue between nations. Started the _World Post_ as a collaboration with the _Huffington Post_ before joining the _Washington Post_ to provide a platform for substantive analysis, opinion, and more globally-focused ideas coverage. But they’re only getting started. In recognition of the five-alarm fires on the horizon and the radical societal shifts currently wracking the global citizenry, the Institute is sharpening its focus and concentrating its efforts on four “Great Transformations,” which include the Future of Democracy, Transformations of the Human, Globalization and Geopolitics, and the Future of Capitalism.

We spoke with three key figures out of many remarkable people at the Berggruen Institute (Nils Gilman, Vice President of Programs, who solidified the aforementioned program areas, and Bing Song, Director of the Berggruen Institute’s ChinaCenter, which plays an important role in the global vision of the Institute, both deserve a mention) to discuss the state of these fields and possible paths forward, as well as the future of the institute as a whole: Nicolas Berggruen, founder of the Institute; Dawn Nakagawa, Executive Vice President and co-director of the Future of Democracy program area, which oversees the Renovating Democracy for the Digital Age project; and Tobias Rees, Director of the Transformations of the Human Program. We present these conversations alongside renderings of the planned new headquarters as well as artwork from Mara Eagle \[see below\], who is working with the institute as part of the Transformations of the Human program to explore the intersections of artistic practice and developments in machine learning and artificial intelligence.

![MARA EAGLE. “IF YOU CAN NOT AVOID, ENJOY THE CHARM OF RAIN CAMPING!; THIS WETLAND IN CALIFORNIA IS HABITAT FOR MIGRATING SNOW GEESE; TUCUMÁN (SPANISH PRONUNCIATION: \[TUKUˈMAN\])” FROM THE SERIES ATLAS OF MAGNILOQUENT DIGITALIA. (2017-2018). COURTESY…](https://assets-global.website-files.com/62ee0bbe0c783a903ecc0ddb/6472b29db3184efb1db4e41c_FLAUNT-MAGAZINE-BREGRUENN-INSTITUTE-4.jpeg)

MARA EAGLE. “IF YOU CAN NOT AVOID, ENJOY THE CHARM OF RAIN CAMPING!; THIS WETLAND IN CALIFORNIA IS HABITAT FOR MIGRATING SNOW GEESE; TUCUMÁN (SPANISH PRONUNCIATION: \[TUKUˈMAN\])” FROM THE SERIES ATLAS OF MAGNILOQUENT DIGITALIA. (2017-2018). COURTESY OF THE ARTIST AND THE BERGGRUEN INSTITUTE.

**Nicolas Berggruen**

=====================

_Founder of the Berggruen Institute_

------------------------------------

**How did the institute get its start? What inspired you to spend your time and energy and money on these projects?**

It came about almost organically. As a teenager growing up in Paris, I was interested in philosophy and politics. And then I moved to America, to New York, where I took a long detour in business, but after a while I got interested again in what really captured me as a teenager—politics and philosophy. I pursued these subjects in Los Angeles, spending a lot of time with political theory and philosophy teachers at UCLA, because I wanted to learn. I didn’t think of creating an institute at first—I was just interested in the subjects. I started writing a book with Nathan Gardels, _Intelligent Governance for the 21st Century: A Middle Way Between West and East_, and we put together a little group of thinkers to help us contemplate these ideas.

So, starting with a personal interest and these initial collaborations, it progressed to feeling that, as opposed to just doing this work from a personal and almost selfish standpoint, why not go beyond and really engage others in a serious and a professional way? To learn from them, yes—but also to enable them to develop ideas and do something with those ideas. Communicate them or work to bring about reforms. The idea was to put the energy and the curiosity into the service of others by creating an institute.

**Can you outline the structure of the Institute, and of the primary initiatives that the institute is pursuing?**

Well, we have what we call “Great Transformations,” and there are sort of four legs to that. One is the future of democracy, second is the future of capitalism, third is the future of geopolitics and governance—how we get the world to come together on at least a few things—and lastly, the future of the human species, who are we becoming and how we are changing as humans. The four legs are the foundation for all of our work. We do some of it in-house and then we enable a lot of work by collaborating with others, people who we think have something to say, something we can learn from; scholars or scientists or technologists or politicians.

**How would you describe the unified world that, ideally, the different initiatives of the Institution will lead to? What would that world look like?**

I think for anybody who does what we do, the goal is creating a better world for all. Better meaning: a world where people are fulfilled, where they have the chance to live a life of productivity and to experience the basic joys of living a life.

That requires political systems that enable all of this. These are the challenges we have at the institute. How do we make democracy work in the modern age? How do we make capitalism work for as many people as possible in a way that’s fair? How do we get the different cultures in the world that have very different roots and very different outlooks or traditions to cooperate? How do we think about, in a productive way, the opportunities and the challenges that will come with technology fundamentally transforming who we are? Literally transforming who we are as humans. We are trying to think about these problems with a very long view.

**What made you choose California, particularly Los Angeles, as a place to center your efforts and put down your own roots?**

I found Los Angeles to be a place with enormous freedom, and a lot of space—physical space, yes, but also mental space—to think and to develop ideas. It’s a very new place, with a limited past, so you have to think in the other direction, meaning the future. It’s a space for imagination, with Hollywood and many other creative industries. It’s very diverse in every way possible, and non-judgmental. So it was kind of just a natural fit. And because I spent time here with professors at UCLA, one thing led to another.

**What role will the new facility in the Santa Monica Mountains play in the future of the Institute?**

The Institute today, and historically, has been able to function actually pretty well by having people work in-house, but then we’re lucky to have very good access to others at universities, and thinkers all around the world. We’ve been able to be quite productive without having a traditional symbolic home. But, I think that we’re lucky enough so that we can create something that is a center, and a physical center. And talk about luck, we’ve been able to buy an incredible piece of land in the middle of LA, in the Santa Monica Mountains next to the Getty Center—a very beautiful piece of land with very good access, incredible views, and very peaceful. You’re in the middle of the city, but you’re also removed, so it’s sort of the best of both worlds.

The idea is for it to be almost like a secular monastery, a place for people to think, to come together, to live and work and spend time, to collaborate or not, on ideas. But we’ll still maintain our model of working with talent wherever they are, all over the world. The LA center is going to be the principal space, but we have also just opened up a center in Beijing with Peking University, one of the leading universities in China. It’s very exciting, so in some ways we will have two main centers, one in America, one in China. Two opposites in terms of culture and politics, and that’s on purpose.

**What makes philosophical, rigorous thinking so important today? Why do you think a philosophical approach is still relevant to contemporary issues?**

I feel strongly that ideas shape who we are. At the end of the day, our culture is in many ways still operating on the set of ideas that were developed in the West 2,000 years ago, and any changes that happened over time came mostly from thinkers who took those fundamental ideas and developed them more. The Western focus on the strength of the individual that is so central to our culture, I think that came from our religion, from the early Greek philosophers, and then especially from the Enlightenment that gave so much power to the individual and to individual voices. This all came from philosophers, and in a world today that is transforming so rapidly and facing challenges from that rapid change, I think we need thinkers who can think in depth and on long timescales more than ever. And that’s why we created the philosophy prize, to highlight these thinkers and to demonstrate that what they are doing is valuable.

**It seems like one of the greatest challenges to improving governance right now is the slide into populism and demagoguery we’ve seen in the West. How is the institute taking strides to fight those things or to resolve them? Are you hopeful for the outcome?**

I think that you have to be hopeful if you’re doing the kind of thing we do. But it is a very volatile, challenging time. The world right now is more and more fragmented. Within countries people are highly divided, especially in the West, and between countries people are competing economically, ideologically, culturally, and politically as well. So I think we’re getting further away as opposed to getting closer at the moment.

But I think people are starting to realize, especially in democracies, that there is something wrong with the system, and I think it will push us to reform or to think about reforms. We hope to help ensure that the process of reform takes a long view, and leads to better outcomes.

**How does the Transformations of the Human program fit into the Institute’s broader interests?**

These technologies, mostly AI and gene editing, really allow us, in my mind, to sort of take off. We can alter who we are as a species, and create new species. AI agents will already be better than us in many areas. The question is, how will we relate to it? How will it relate to us? Will it be part of us? Will it be separate from us, an entirely new species? Will it feel a kinship or loyalty towards its creator, its parents, or the opposite? Like a Greek tragedy, will it want to kill us? So, these are the obvious questions, the kind of things that I think we very much need to, as humans today, ask ourselves.

Like with every technology, you have the potential rewards and the potential risks, but with AI or gene editing it’s on a much larger scale. And then the implications on the philosophical side are enormous. So, intellectually, it’s incredibly interesting. These developments have the potential to radically change the way human societies and governments work, along with everything else. And many countries are eager to be the first to exploit these technologies. We want to make sure we’re thinking about these things in advance, and that we have talented individuals preparing for these changes.

MARA EAGLE. “AERIAL HISTORY - CANBERRA IN THE 50S, 60S AND 70S; MICRO POROSITY MEASUREMENTS; UNKNOWN” FROM THE SERIES ATLAS OF MAGNILOQUENT DIGITALIA.

**Dawn Nakagawa**

=================

_Executive Vice President and Co-Director of the Future of Democracy Program_

-----------------------------------------------------------------------------

**You co-direct the Future of Democracy program, and you oversee a subset of that program concerned with “Renovating Democracy for the Digital Age.” How did these programs develop?**

Two years ago, we launched the Renovating Democracy for the Digital Age project. The point of that work was really to look at how technology was presenting both challenges and new opportunities for democratic institutions. What we ended up spending a lot of time on, and trying to convince policy makers of, was the fact that social media and the way people were absorbing information and this sort of massive transformation of the information ecosystem was going to have a really big impact on democracy.

Nobody was listening to us at the time. It was fall of 2016, before the election. We were talking to people and they were like..._really_? They weren’t that interested. And these were heads of countries. We were telling them, Yes, this is going to be an issue. It’s already happening. There is a decline in political discourse, we’re becoming more polarized.

The echo-chamber effect, the extreme views that get a lot of play because they go viral, the misinformation, how Twitter has become basically a big verbal slugfest... we were showing them all of this, and we were trying to explain to them that we thought all of those things are going to have a big impact, and are affecting the health of our democracy. Anyway, 2016 happened, Trump’s on Twitter, the Cambridge Analytica and Facebook scandal erupts, and suddenly people are taking our calls. So it was in parallel with the Future of Democracy and the Great Transformations articulation of our mission that this work was underway, and it was clear that that work would fit into what we would call “Future of Democracy.”

**Has there been an effort to adapt or strengthen our institutions in light of these revelations, and the mainstream acceptance of the role of digital media in democracy?**

The conversation around social media has come a long way. There are a lot of people doing good work on it. We are more interested in, not so much the specific things that are happening on social media, but how people are absorbing information and how their social expectations have changed, and what that means. What the implications are for political systems or politics in general. The other big question is, how do we reform democratic institutions to adapt to this new era of information and communication? Because it’s here, and it’s not going away. How do we push policy makers towards that?

My personal belief is that we just don’t have the right institutional infrastructure for the time we’re living in. Going back to the Magna Carta, every time we see a power transition in a society, new institutional infrastructures are developed. Well, power has shifted dramatically in the last 30 years. This is a massive evolution. Hierarchies do not work anymore, but we are still living under the institutional infrastructure of those hierarchies, and as much as we try to adapt to them, it’s not working. Humans don’t change, not without a massive crisis. Given Trump, and the Supreme Court, and the absolute outrage on the left, I think we might be headed for one.

**How do you move from thinking about these things to enacting concrete change?**

Concrete change—that is the long-term hope. First we have to understand the dynamics of power in a networked society. What are the sources of legitimacy and credibility? How can you create structures that engender trust from constituents? The first step is understanding those types of things, the first principles. Once we understand that, we can start to figure out how we design new institutions to reflect those things so that people do trust them.

I think the next step would be, if we think there are right answers, what are ways that we could move in that direction? Maybe it’s baby steps, doing a pilot project somewhere. Presumably and hopefully, the product of this is both an understanding of how society has shifted, and what the principles of trust are going to be in the 21st century as we continue to evolve down this trajectory. And meanwhile, those solutions need to preserve our democratic values.

**Can you provide an example of someone, or maybe a country or an organization, that has made strides in creating this sense of trust between citizens and leaders?**

Look at the Five Star Movement in Italy. It’s a crazy platform, but what they are trying to do is avoid elite capture, or the perception of elite capture. It was started by a popular comedian and a web strategist. They advocate for electronic voting, and sort of crowdsource legislative ideas. Those things bubble up and policy makers take them on. Representatives don’t actually hold a seat; the person who occupies the seat will change based on their expertise. The same thing with the Pirate Party and liquid democracy—they are changing the dynamics of representation so that nobody holds the seat for a very long time, and power is transferred based on some sort of meritocratic principle. Their specific policies and whether or not they’re going to be able to do this successfully long term are of less interest than the innovations they’re making within a democratic framework. It is a different kind of representative democracy. They’re doing it because it is more trustworthy: everybody gets a turn or a say, and everyone gets to participate in some way.

A lot of it has to do with our own expectations changing. What do I trust these days? I am not sure, but when I go online to check out a new restaurant, the first thing I want to see is the Yelp review. These are signals of legitimacy. I don’t know what the Yelp review version of democracy is, but what we’re trying to do is look at how society has changed and then what those innovators are doing, and sort of develop new ideas. You know, jury duty for legislation for example. Give a large group of people a chance to go really deep on one piece of legislation and have those people decide. As long as they are more or less representative of the country, why not do it that way? Rather than leave it to people who have been seated in power who we can’t seem to get rid of because of gerrymandering and so on. So there are ideas out there. I am not pretending at this point that I know what the right ideas are, but we want to understand the principles and dynamics that power legitimacy and how to change and design institutions around that.

**Is there a feeling in your work or in the conversations that you are having that it just doesn’t really make sense to have just one form of government for 300 million people, or that power should be even less centralized than it is to be effective?**

That is a good question. We are in the very early days, and what I expect is that as this evolves, as we think about the structure of how power moves, it is very likely that, just because of the dynamics of legitimacy, and given that proximity is legitimacy, city governments are seen as more legitimate. Therefore, power should be devolved more to the cities, and then you could end up with a very diverse mosaic of democratic systems. So there could be different kinds of democracy and different levels of democracy. Some might opt for things with a lot more direct democracy involved, and a city could choose how it wants to govern and organize itself. But in order for that to happen, the national government has to become a lot less powerful, and that is not going to happen easily.

**How can we trust the results of the democratic process when it feels like voters are not receiving good information, or that they don’t really have the time or the interest to fully inform themselves, or they are swayed by populism or entertainment value instead of ability and platform?**

That is a key question. Have you ever read Neil Postman’s books? The first two chapters talk about how America was a hyper-literary society when it started. The people came over on the Mayflower and they brought more books with them than anything else. It talks about the medium of text and what it forces you to do, how it forces you to think, and the neurological development and composition of a person that develops if that is your main medium for ingesting information. Now we have TV, Twitter, Facebook, hyper-partisan blogs—what kind of person or citizen does that create?

We started with this idea, if more and more people are getting their information from social media, and social media operates according to these rules, that is going to have a huge impact, and we have to think about that. As we got further, the question became, Can you have a democracy with a completely broken and fractured media system? Where you don’t know what you can trust, people don’t trust most of it, and they basically vibrate towards what they want to hear? Can you actually have a democracy where there is no shared narrative?

That still remains a key question in this project. My sense is that there are ideas out there that do allow you to preserve democratic values as long as people trust the system. Let’s say you don’t know anything about xyz issue—that is fine. You don’t need to make a decision, as long as you know that there is a system out there whereby it is not corporations who are defining what policy is based on self-interested positions, but a randomly selected group of people who are going to be given the opportunity to really study the issue and then come back with some recommendations, and those recommendations would go into what the law is. It doesn’t mean it is going to be perfect, but if you trust the process at least not to be corrupted, there are ways to preserve democracy and give people voice in a way that seems equitable but doesn’t necessarily have the shortcomings of our current system. I do believe there are innovations out there that can strike that balance while being good governments.

**Does artificial intelligence have a role in the future of governance?**

Our tolerance for human error is going to become lower and lower. Particularly as AI becomes more widespread. Every chance we get, we are replacing humans with technology because we think they are going to have better results and fewer mistakes. For the most part, that’s right. And as we move into a society where that is more and more the case, it is going to seem unethical if we know that the other alternative is—well, you put a human at the wheel, he falls asleep, people die. It seems like an ethical imperative. Similarly, I think at some point we will be dealing with parts of our government that become very technocratic and very much run by AI systems—this is already happening in our criminal justice and economic systems. We are not that far away from a campaign to vote an AI into a representative seat. It will start as a game, but this AI system will be so great that people will be like, ‘Why don’t we have this?’ It doesn’t break down, it doesn’t have affairs, it doesn’t fail us. It is not human, and that is great. This is where the questions we’re pursuing in the Future of Democracy program dovetail with the Transformations of the Human program.

MARA EAGLE. “AERIAL HISTORY - CANBERRA IN THE 50S, 60S AND 70S; MICRO POROSITY MEASUREMENTS; UNKNOWN” FROM THE SERIES ATLAS OF MAGNILOQUENT DIGITALIA.

**Dawn Nakagawa**

=================

_Executive Vice President and Co-Director of the Future of Democracy Program_

-----------------------------------------------------------------------------

**You co-direct the Future of Democracy program, and you oversee a subset of that program concerned with “Renovating Democracy for the Digital Age.” How did these programs develop?**

Two years ago, we launched the Renovating Democracy for the Digital Age project. The point of that work was really to look at how technology was presenting both challenges and new opportunities for democratic institutions. What we ended up spending a lot of time on, and trying to convince policy makers of, was the fact that social media and the way people were absorbing information and this sort of massive transformation of the information ecosystem was going to have a really big impact on democracy.

Nobody was listening to us at the time. It was fall of 2016, before the election. We were talking to people and they were like..._really_? They weren’t that interested. And these were heads of countries. We were telling them, Yes, this is going to be an issue. It’s already happening. There is a decline in political discourse, we’re becoming more polarized.
